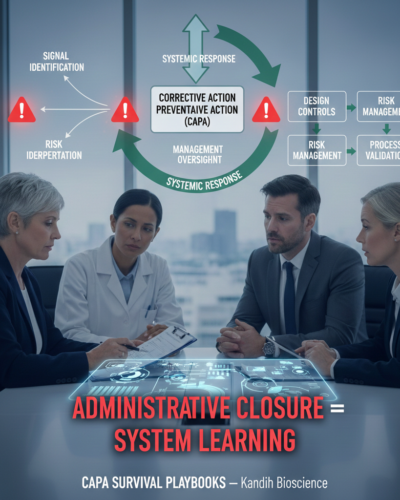

FDA Expects CAPAs to Feed Design Controls

The Complaint-to-CAPA Mapping Checklist

January 23, 2026

Why CAPA Without DHF Updates Fails

January 27, 2026

CAPA Survival Playbooks — Kandih Bioscience

From an inspection perspective, a CAPA that stops in QA without informing design inputs, risk management, or validation is incomplete. Administrative closure does not demonstrate learning or restored control. Evidence does: design and risk assumptions were re-examined and changed where the data warranted.

When CAPAs Stop at QA, Inspections Don’t

One of the most reliable predictors of a CAPA-related inspection finding is isolation, not poor documentation.

Specifically: CAPAs that live and die inside QA—never touching design controls, risk management, or lifecycle decisions.

These CAPAs may be timely, complete, and formally “closed,” yet they routinely fail inspection scrutiny. Why? Because closure proves paperwork discipline, not system learning.

A persistent misconception drives this failure:

CAPA closure equals resolution.

From an inspection standpoint, closure only confirms administrative completion. It does not demonstrate that the product, process, or system learned anything. When CAPAs are treated as downstream cleanup instead of upstream correction, the same failure modes inevitably reappear—sometimes years later, sometimes in new products.

This is not theory. It is inspection reality.

What FDA Actually Expects (Regulatory Reality Check)

Inspectors from the U.S. Food and Drug Administration do not evaluate CAPAs in isolation. They evaluate whether CAPAs function as a risk-control feedback mechanism across the product lifecycle.

In practice, inspection logic follows a predictable path:

1. Signal Identification

Complaints, adverse events, deviations, audit findings, or supplier failures surface potential risk.

2. Risk Interpretation

The organization determines whether the issue reflects:

A design assumption failure

A process capability gap

A control breakdown

3. Systemic Response

CAPA actions are expected to extend beyond containment and inform:

Design inputs and outputs

Risk analyses and hazard controls

Verification and validation strategies

4. Management Oversight

Leadership reviews whether design and risk decisions remain valid given real-world performance.

Inspectors deliberately test these linkages. A complaint-driven CAPA leads them to the design history file. A deviation-related CAPA leads them to risk management updates. A supplier CAPA raises questions about how far the design relies on external controls.

When CAPAs do not feed these systems, inspectors conclude that learning is not occurring.
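The linkages inspectors test can be written down as a simple lookup from signal source to the records a reviewer would expect a CAPA to touch. The Python sketch below is illustrative only. It assumes a hypothetical `EXPECTED_LINKS` map that paraphrases just the three examples above. It also assumes that a CAPA record lists the records it references by name. A real map would come from the organization's own procedures, not from this sketch.

```python
from typing import Dict, FrozenSet, Iterable, List

# Hypothetical linkage map paraphrasing the three examples in the text; not an FDA-published list.
EXPECTED_LINKS: Dict[str, FrozenSet[str]] = {
    "complaint": frozenset({"design history file"}),
    "deviation": frozenset({"risk management file"}),
    "supplier": frozenset({"design reliance on supplier controls"}),
}


def missing_links(signal_source: str, linked_records: Iterable[str]) -> List[str]:
    """Return the expected linkages a CAPA record does not reference, sorted for stable output."""
    expected = EXPECTED_LINKS.get(signal_source, frozenset())
    return sorted(expected - set(linked_records))


# A complaint-driven CAPA that cites only a training record leaves the DHF link open.
print(missing_links("complaint", ["training record"]))
```

An empty result means only that the expected records are referenced. Whether those records were actually changed in a meaningful way is still a judgment the reviewer has to make.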

Why FDA Does Not Treat CAPA as a QA Tool

QA may coordinate CAPA.

FDA evaluates CAPA as evidence of organizational learning and management control—especially where design assumptions meet operational reality.

A CAPA that does not challenge design is not corrective.

It is cosmetic.

Common CAPA Failure Modes When Design Is Bypassed

1. CAPAs That Stop at Containment

Actions focus on rework, retraining, or SOP updates without evaluating whether design intent was flawed.

Why FDA objects:

Containment without design feedback signals superficial correction.

2. Root Cause That Ignores Design Inputs

Execution errors are cited, but requirements, tolerances, or use conditions are never reassessed.

Why FDA objects:

Design controls must reflect real-world use. Protecting design assumptions at the expense of patient safety signals unmanaged risk.

3. No Linkage to Risk Management Files

CAPAs close without updates to hazard analyses or failure mode evaluations.

Why FDA objects:

Risk documents that never change despite field data lose credibility.

4. Training-Only CAPAs for Design-Linked Failures

Human error is cited repeatedly where usability problems or interface complexity are evident.

Why FDA objects:

Training-only actions are interpreted as avoidance of design responsibility.

5. Effectiveness Checks That Ignore Design Performance

Verification focuses on task completion rather than whether design performance improved under real conditions.

Why FDA objects:

Effectiveness must show changed system behavior—not completed paperwork.

CAPA Closure vs. CAPA Effectiveness: Why Design Control Matters

Administrative closure answers:

Were the CAPA tasks completed?

Regulatory effectiveness answers:

Did the product and system learn?

Inspectors expect post-closure evidence such as:

Updated design or risk documentation

Verification or validation data showing improved performance

Trending demonstrating reduced recurrence

Cross-product or cross-version assessment

Time matters. Design-related CAPAs cannot be credibly closed without sufficient post-change observation.

Data matters. Effectiveness must tie back to the original failure mode.

When CAPAs do not influence design controls, effectiveness claims lack substance.
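The "time matters, data matters" test can be made concrete with a simple trend comparison. The Python sketch below is a minimal illustration under stated assumptions. It assumes a hypothetical complaint record that carries a failure-mode code, and it assumes units distributed are known for each window. The 180-day minimum observation period and the 50% reduction target are illustrative placeholders, not FDA thresholds.

```python
from dataclasses import dataclass
from datetime import date
from typing import List


@dataclass
class Complaint:
    received: date
    failure_mode: str  # hypothetical failure-mode code assigned during complaint evaluation


def rate_per_1000(complaints: List[Complaint], failure_mode: str,
                  start: date, end: date, units_distributed: int) -> float:
    """Complaints for one failure mode per 1,000 units distributed, counted in [start, end)."""
    count = sum(
        1 for c in complaints
        if c.failure_mode == failure_mode and start <= c.received < end
    )
    return 1000 * count / units_distributed


def effectiveness_check(complaints: List[Complaint], failure_mode: str,
                        pre_start: date, change_date: date, post_end: date,
                        pre_units: int, post_units: int,
                        min_post_days: int = 180,            # illustrative, not regulatory
                        required_reduction: float = 0.5) -> str:  # illustrative, not regulatory
    # Time matters: do not judge effectiveness before enough post-change observation.
    if (post_end - change_date).days < min_post_days:
        return "insufficient post-change observation"
    # Data matters: compare rates for the original failure mode only.
    before = rate_per_1000(complaints, failure_mode, pre_start, change_date, pre_units)
    after = rate_per_1000(complaints, failure_mode, change_date, post_end, post_units)
    if before == 0:
        return "no baseline signal for this failure mode"
    reduction = (before - after) / before
    return "effective" if reduction >= required_reduction else "not demonstrated"


complaints = [
    Complaint(date(2025, 1, 15), "FM-07"),
    Complaint(date(2025, 2, 20), "FM-07"),
    Complaint(date(2025, 4, 3), "FM-07"),
    Complaint(date(2025, 5, 28), "FM-07"),
    Complaint(date(2025, 3, 9), "FM-02"),   # different failure mode, ignored
    Complaint(date(2025, 10, 12), "FM-07"),
]
print(effectiveness_check(complaints, "FM-07",
                          pre_start=date(2025, 1, 1), change_date=date(2025, 7, 1),
                          post_end=date(2026, 1, 1), pre_units=2000, post_units=2000))
# prints: effective
```

The example has four FM-07 complaints per 2,000 units before the change and one after. That drops the rate from 2.0 to 0.5 per 1,000 units, a 75% reduction. A real effectiveness check would also need to justify the observation window and to test whether the difference is statistically meaningful. This sketch does neither.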

Business and Regulatory Consequences of Bypassing Design

Regulatory: CAPA-related Form 483 observations, repeat findings, escalated enforcement

Product lifecycle: Recurring issues across generations of the same product

Strategic: Loss of FDA confidence in proactive risk management

Financial: Remediation costs, delayed approvals, added risk in partner or acquisition due diligence

From a regulator’s standpoint, CAPAs that bypass design controls invite recurrence, because the system that created the failure remains unchanged.

Anonymized Inspection Scenario

Complaint data showed device misuse leading to performance failures.

A CAPA was opened. Staff retrained. Labeling clarified. CAPA closed.

When asked whether design inputs or usability testing were reassessed, the firm answered no. Similar complaints had occurred for years. Risk analysis was unchanged.

The resulting observation was not about training adequacy.

It was about failure to feed real-world data back into design controls.

Inspector Red Flags (Design Feedback)

Inspectors grow skeptical when they see:

Repeated CAPAs tied to the same design features

Risk management files that never change

Training used instead of design improvement

CAPAs closed without assessing validation impact

Management review that never challenges design assumptions

These are signals of stalled learning.

What “Good” Looks Like to FDA

CAPA systems that hold up under inspection share clear traits:

Explicit design linkage documented in CAPA records

Risk integration when field data warrants updates

Cross-functional ownership (engineering, quality, operations)

Meaningful verification tied to real-world performance

Active management oversight that expects CAPAs to inform design

Many organizations use a simple internal prompt set:

Did this CAPA challenge a design assumption?

Were risk documents updated accordingly?

Was verification sufficient to show improved performance?

Could this issue exist in other products or versions?

Unanswered questions signal a broken feedback loop.
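A prompt set like this can be encoded so that gaps surface during CAPA review rather than during inspection. The Python sketch below is hypothetical in its record layout. It assumes the `PromptAnswer` and `CapaRecord` fields shown here, and it adds one assumed rule: a "yes" must cite evidence, and a "no" or "n/a" must carry a documented rationale. Only the four prompt texts come from this article.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional

# The four prompts from the text; everything else in this sketch is a hypothetical layout.
PROMPTS = (
    "Did this CAPA challenge a design assumption?",
    "Were risk documents updated accordingly?",
    "Was verification sufficient to show improved performance?",
    "Could this issue exist in other products or versions?",
)


@dataclass
class PromptAnswer:
    answer: Optional[str] = None     # "yes", "no", or "n/a"; None means unanswered
    evidence: Optional[str] = None   # hypothetical reference to a DHF, risk file, or V&V record
    rationale: Optional[str] = None  # justification required for "no" or "n/a"


@dataclass
class CapaRecord:
    capa_id: str
    answers: Dict[str, PromptAnswer] = field(default_factory=dict)  # prompt text -> answer


def feedback_loop_gaps(record: CapaRecord) -> List[str]:
    """List prompts that are unanswered or answered without supporting evidence or rationale."""
    gaps = []
    for prompt in PROMPTS:
        a = record.answers.get(prompt, PromptAnswer())
        if a.answer is None:
            gaps.append(f"{prompt} -> unanswered")
        elif a.answer == "yes" and not a.evidence:
            gaps.append(f"{prompt} -> 'yes' without cited evidence")
        elif a.answer in ("no", "n/a") and not a.rationale:
            gaps.append(f"{prompt} -> '{a.answer}' without documented rationale")
    return gaps


record = CapaRecord("CAPA-0001", {
    PROMPTS[0]: PromptAnswer("yes", evidence="DHF-XYZ rev B"),  # hypothetical record ID
    PROMPTS[1]: PromptAnswer("no"),                              # no rationale given
    PROMPTS[3]: PromptAnswer("n/a", rationale="single-product platform"),
})
for gap in feedback_loop_gaps(record):
    print(gap)
```

Here the record is flagged twice: the risk-document prompt is answered "no" with no rationale, and the verification prompt is missing entirely. Requiring a rationale for "no" keeps the check from rewarding a quick negative answer over a documented decision.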

FDA expects CAPAs to do more than correct errors.

They are expected to teach the system—especially through design controls.

CAPAs that fail to feed design decisions signal unmanaged risk and stalled learning.

For organizations preparing for inspection, lifecycle transitions, or due diligence, a focused CAPA–design control gap analysis can surface broken feedback loops before regulators—or partners—do.

References

21 CFR §820.100 — Corrective and Preventive Action (Medical Devices)

Establishes CAPA as a system for analyzing quality data (including complaints and returned product) and for investigating causes. It also requires firms to implement corrective and preventive actions and to verify or validate that those actions are effective and do not adversely affect the finished device.

https://www.ecfr.gov/current/title-21/chapter-I/subchapter-H/part-820/section-820.100

21 CFR §820.30 — Design Controls (Medical Devices)

Requires design inputs, outputs, verification, validation, and design changes to be controlled and documented in the Design History File (DHF). CAPA findings that implicate design must feed this system.

https://www.ecfr.gov/current/title-21/chapter-I/subchapter-H/part-820/section-820.30

21 CFR §820.198 — Complaint Files (Medical Devices)

Requires complaints to be received, reviewed, evaluated, and investigated where necessary. This makes complaint data an upstream input to CAPA and design reassessment.

https://www.ecfr.gov/current/title-21/chapter-I/subchapter-H/part-820/section-820.198

FDA Guide to Inspections of Quality Systems (QSIT)

Describes how FDA investigators trace complaints, deviations, and failures through CAPA into design controls, risk management, and management responsibility.

https://www.fda.gov/media/75818/download

FDA Warning Letters (Public Database)

Repeatedly cite ineffective CAPAs where corrective actions did not result in design changes, risk updates, or systemic learning.